- cross-posted to:

- technology@lemmy.world

What made me stop is:

- They allowed AI answers. Full stop, I don’t want AI regurgitating AI.

- They did nothing to improve the community except repeatedly say they’ll improve the community.

- The barrier to entry is still way too high. You can’t post/comment without rep, but you can’t get rep without posting/commenting. So people joining struggle to even say hello.

As an engineer who was a power user on StackOverflow, I hate that we are losing a major community that helps coders, beginner or advanced, ask questions.

They don’t allow AI answers though?

Other than that, yeah I agree. They needed to switch to curating their content and helping their community and they didn’t.

What made me stop is everything I’ve posted in the last five years has been downvoted and/or closed for the stupidest reasons imaginable. Even a nearly decade old question I posted has recently been downvoted and closed. It’s been true for ages that they have created a culture of elitist rule followers hellbent on following the letter of the rule and not the spirit (and many times even ignoring the letter of the rule just to close things), but nowadays it’s just so much worse.

I’ll write a question. Spend like 30 minutes making sure it’s good and that there aren’t duplicates because I have so much fucking anxiety about getting downvoted and closed. I’ll find similar questions and explain why it’s different. Then when I post? Downvote, closed as duplicate. Commenters being condescending assholes.

Not to mention all the other shit over the years. They’re violating the license everyone contributes under by not allowing the content to be used for certain purposes. Meta has been a joke for ages. They don’t listen or engage.

It’s terrible. I will literally see an answer telling me to do something that is straight up not an option, or to use a function that isn’t available to me. It’s so obvious when people use AI-generated answers.

Kind of what happens when you build community, then try to scale for profit.

- Although we have seen a small decline in traffic, in no way is it what the graph is showing (which some have incorrectly interpreted to be a 50% or 35% decrease). This year [2023], overall, we’re seeing an average of ~5% less traffic compared to 2022.

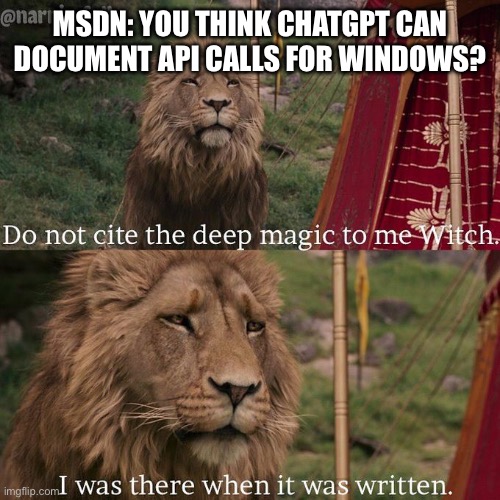

So what do we train gpt on when stack overflow degrades?

Will library docs be enough? Maybe.

Probably public GitHub projects, which may or may not be written using GPT

Absolutely terrifies me.

I asked AI to create an encryption method and it pulled code from 2015.

It smelled funny, so I asked some experts. They told me that the AI’s solution had been vulnerable since 2020 and recommended another method.

I feel like the thing that terrifies you is really just idiots with powerful tools. They have always been around, and this is just a new, albeit scarier than normal, tool. The idiot implementing ‘an encryption method wholesale, directly from an AI’ was always going to break shit. They can just do it faster, more easily, and with more devastation. But the idiots were always going to idiot regardless. So it’s up to the non-idiots to figure out how to use the same powerful tools to protect everyone (including the idiots themselves) from breaking absolutely everything.

In the weeds here, but just trying to say AI doesn’t kill people, people kill people. But the AI is gonna make it a fuck load easier, so we should absolutely put regulation and safeguards in place.

What happened in 2020 that suddenly made that solution vulnerable?

What AI did you use? I feel like most should have (big “should have”) known better, since the vulnerability was within its cutoff date. Yikes.

I don’t know if there is a huge difference from looking up examples in the docs and pasting them into the code. That’s what people do otherwise, so… :)

Yeah that makes sense. I know people are concerned about recycling AI output into training inputs, but I don’t know that I’m entirely convinced that’s damning.

GIGO.

Yeah I agree garbage in garbage out, but I don’t know that is what will happen. If I create a library, and then use gpt to generate documentation for it, I’m going to review and edit and enrich that as the owner of that library. I think a great many people are painting this cycle in black and white, implying that any involvement from AI is automatically garbage, and that’s fallacious and inaccurate.

Yes, but for every one like you, there’s at least one that doesn’t and just trusts it to be accurate, or doesn’t proof read it well enough and misses errors. It may not be immediate, but that will have a downward effect over time on quality, which likely then becomes a feedback loop.

No matter how good your photocopier is, a copy of a copy is worse, and gets worse every time you do it.

The theory behind this is that no ML model is perfect. They will always make some errors. So if these errors they make are included in the training data, then future ML models will learn to repeat the same errors of old models + additional errors.

Over time, ML models will get worse and worse because the quality of the training data will get worse. It’s like a game of Chinese whispers.
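To put a rough number on the "copy of a copy" argument, here is a toy sketch. It assumes (and this is purely an assumption for illustration, not a measured result) that every model generation keeps all the errors it inherited and independently corrupts a fixed fraction of what was still correct. The function name and the 5% rate are hypothetical.

```python
def surviving_accuracy(per_generation_error: float, generations: int) -> float:
    """Fraction of content still correct after repeated retraining.

    Toy assumption: each generation inherits every earlier error and
    independently corrupts `per_generation_error` of the remaining
    correct content, so accuracy decays geometrically.
    """
    return (1.0 - per_generation_error) ** generations


if __name__ == "__main__":
    # Hypothetical 5% error rate per generation, chosen only for illustration.
    for n in (0, 1, 5, 10, 20):
        print(f"generation {n:2d}: {surviving_accuracy(0.05, n):.1%} still correct")
```

Real training pipelines filter and mix in fresh human data, so the actual curve could look very different. The point is only that small per-generation errors compound if nothing corrects them.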

I think the biggest issue arises in the fact that most new creations and new ideas come from a place of necessity. Maybe someone doesn’t quite know how to do something, so they develop a new take on it. AI removes such instances from the equation and gives you a cookie cutter solution based on code it’s seen before, stifling creativity.

The other issue being garbage in garbage out. If people just assume that AI code works flawlessly and don’t review it, AI will be reinforced on bad habits.

If AI could actually produce significantly novel code and actually “know” what its code is doing, it would be a different story, but it mostly just rehashes things with maybe some small variations, not all of which work out of the box.

It may be fine for code, because malformed code won’t compile/run.

It’s extremely bad for image generators, where subtle inconsistencies that people don’t notice will amplify.

SO is already degraded because they didn’t allow new answers even though the old answers are based on old, deprecated versions and are no longer relevant.

This has been a concern of mine for a long time. People act like docs and code bases are enough, but it’s obvious when looking up something niche that it isn’t. These models need a lot of input data, and we’re effectively killing the source(s) of new data.

It feels like less stack overflow is a narrowing, and that’s kind of where my question comes from. The remaining content for training is the actual authoritative library documentation source material. I’m not sure that’s necessarily bad, it’s certainly less volume, but it’s probably also higher quality.

I don’t know the answer here, but I think the situation is a lot more nuanced than all of the black and white hot takes.

There’s a serious argument that StackOverflow was, itself, a patch job in a technical environment that lacked good documentation and debug support.

I’d argue the mistake was training on StackExchange to begin with and not using an actual stack of manuals on proper coding written by professionals.

The problem was never having the correct answer but sifting it out of the overall pool of information. When ChatGPT isn’t hallucinating, it does that much better than Stack Exchange.

Stack Overflow mods finally get what they’ve always dreamed of: no more repeat questions.

StackGPT: begins every answer with “closed as duplicate. Here’s a previous answer I provided to this question…”

Well, having a crawler search through all that garbage, ads, questions, wrong answers. And converting that to facts or condensed information…

Just makes so much more sense, also for the environment, I would think. It saves a ton of useless traffic.

But the “AI” part may be problematic.

Yeah, the bullshit generator part is not useful.

One time, ChatGPT gave me code in Python that used a specific Python library. When I said I was coding in Ruby on Rails, it converted the Python code to Ruby syntax.

It literally made up a solution.

So it made you use a python library in Ruby?

also for the environment, I would think. It saves a ton of useless traffic

GPT is worse and it’s not even close.

My PC can serve up a hundred requests per second running an HTTP server with a connected database with 200W power usage

It takes that same computer 30-60s to return a response from a 13B parameter model (WAY less power usage than GPT), while using 400W of power thanks to the GPU

Napkin math, the AI response uses about 10,000x more electricity
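The napkin math above can be checked with a few lines. This is a minimal sketch using only the figures quoted in the comment (200 W at 100 requests per second, 400 W for 30 to 60 seconds per model response). It assumes the machine's full power draw is attributed to the work being done, which is a simplification.

```python
def joules_per_request(power_watts: float, seconds_per_request: float) -> float:
    """Energy per request = power draw * time spent on that request."""
    return power_watts * seconds_per_request


# HTTP server + database: 200 W while serving 100 requests per second.
http_joules = joules_per_request(200, 1 / 100)

# 13B-parameter model on the same PC: 400 W for 30 to 60 seconds per response.
llm_joules_low = joules_per_request(400, 30)
llm_joules_high = joules_per_request(400, 60)

ratio_low = llm_joules_low / http_joules
ratio_high = llm_joules_high / http_joules

if __name__ == "__main__":
    print(f"HTTP request: {http_joules:.1f} J")
    print(f"LLM response: {llm_joules_low:.0f} to {llm_joules_high:.0f} J")
    print(f"Ratio: {ratio_low:.0f}x to {ratio_high:.0f}x")
```

Under those assumptions the ratio lands between roughly 6,000x and 12,000x, which is consistent with the "about 10,000x" estimate.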

Yes that is true. AI is incredibly bad for the environment.

But crawlers spitting out stuff as text shouldn’t be that complicated, tbh. And they should save a lot of energy.

The ChatGPT release is close to the SO decision to double down on the moderating rules.

Anyway, where is this data from? This change looks suspiciously intense.

Not that I doubt people have been avoiding it since ChatGPT, but I think a part of it is also Google’s partnership with Reddit pushing more search results that way. I’d be curious to see a similar trend regarding Reddit.

SO has been taking a longer time to load for me. Does anyone have the same problem?

I’d like to see the source of this data.

It looks like it’s from https://stackoverflow.com/site-analytics, but I don’t have sufficient karma to see the raw data.