For anyone interested, there are some image poisoning tools out there to protect your art (Glaze, for example) or even attack AI models directly (Nightshade, for example).

AFAIK these are very model-specific attacks and won’t work against other models. That their tools keep the art looking the same to the human eye is a great offering, and there’s always rigorous watermarking (esp. with strong contrast) as a universally effective option.

Thank you very much for the additional details^^

Watermarking with strong contrast prevents ai from using the images in training? Since when?

It doesn’t selectively prevent learning; instead it hinders overall recognition, even to the human eye to some extent. The examples I know are old, but artists once tried to gauge models’ i2i (image-to-image) capability from sketches (there were a few instances where people took artists’ WIPs and fed them to genAI to “claim the finished piece”). Watermarking, or constant tiling all over the image, worked better at worsening genAI’s recognition than a regular noise/dither-type filter.
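For what it’s worth, here is a rough sketch of what “constant tiling all over the image” could look like. It is not any particular tool’s method. It assumes a grayscale image stored as a plain list of rows, and `tile_watermark`, `mark`, and `opacity` are made-up names for illustration. Wherever the tiled mark is set, the pixel is pushed toward the opposite end of the brightness range so the mark stays high-contrast on both light and dark areas.

```python
def tile_watermark(pixels, mark, opacity=0.6):
    """Return a copy of a grayscale image with `mark` tiled over the whole area.

    pixels:  list of rows, each a list of ints in 0..255 (hypothetical format)
    mark:    small list of rows of 0/1; 1 means "draw the watermark here"
    opacity: 0.0 leaves the image untouched, 1.0 paints the mark fully
    """
    mark_h, mark_w = len(mark), len(mark[0])
    out = []
    for y, row in enumerate(pixels):
        new_row = []
        for x, value in enumerate(row):
            # Modulo indexing repeats the mark across the entire image.
            if mark[y % mark_h][x % mark_w]:
                # Blend toward black on light pixels and white on dark ones.
                target = 0 if value >= 128 else 255
                value = round(value + (target - value) * opacity)
            new_row.append(value)
        out.append(new_row)
    return out


# Usage: a flat light-gray 4x4 image with a 2x2 mark that hits one pixel per tile.
image = [[200] * 4 for _ in range(4)]
marked = tile_watermark(image, [[1, 0], [0, 0]], opacity=0.5)
```

In practice you would do this with an image library and an actual logo or text as the mark; the point is only that the mark covers every region instead of sitting in one corner.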

deleted by creator

Open source AIs shouldn’t be training on art that isn’t given to them either, should they? I get that Lemmy has a hard-on for open source. But “open source” doesn’t give people free rein to steal other people’s work. If the art were freely offered, I bet these tools wouldn’t be run on it.

Then people should actually ask for consent before using others’ art as training data

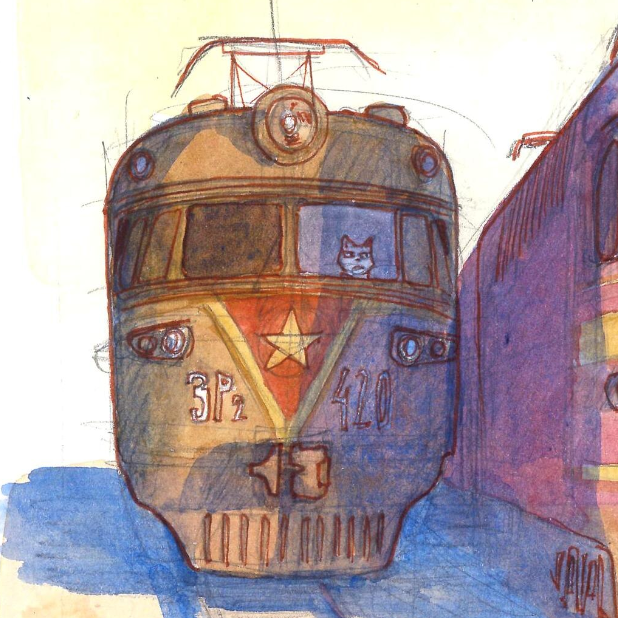

Funnily enough, I’ve found AI has a lot more trouble imitating primitive stick figure art like that than it does more complex art.

It’s not legal

It shouldn’t be. Unfortunately, afaik, no lawsuits have been settled yet. Seems like Andersen v. Stability AI is the one to watch with regard to OP.

I wish.

at least AI art can’t be copyrighted