While I am glad this ruling went this way, why'd she have to diss Data to make it?

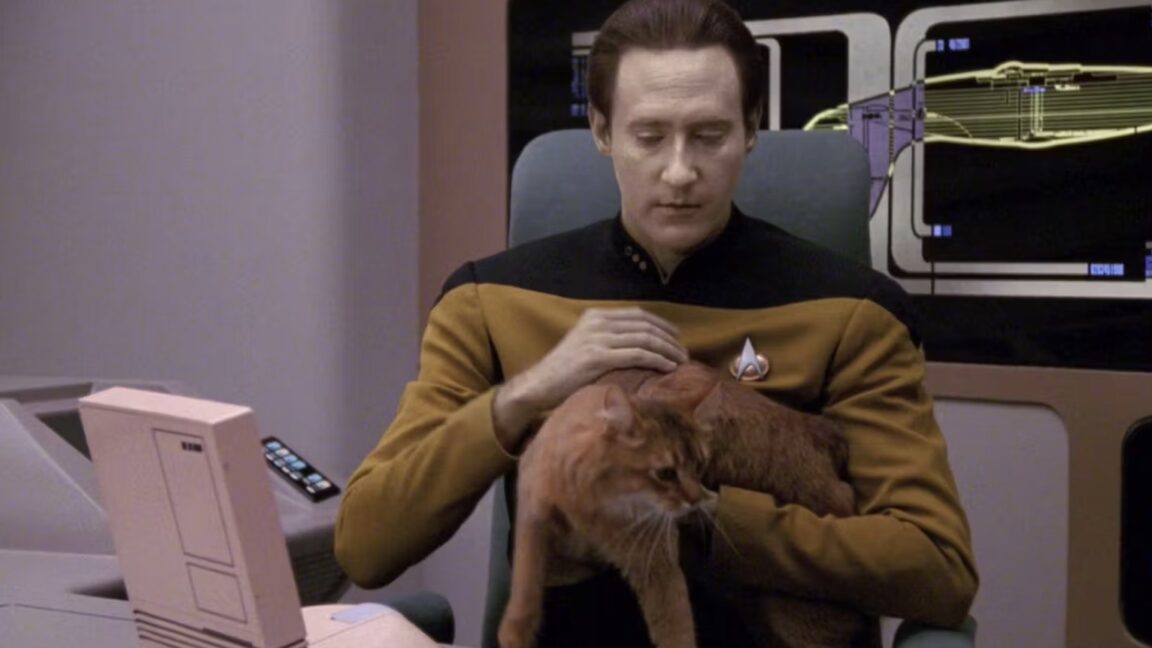

To support her vision of some future technology, Millett pointed to the Star Trek: The Next Generation character Data, a sentient android who memorably wrote a poem to his cat, which is jokingly mocked by other characters in a 1992 episode called “Schisms.” StarTrek.com posted the full poem, but here’s a taste:

"Felis catus is your taxonomic nomenclature, / An endothermic quadruped, carnivorous by nature; / Your visual, olfactory, and auditory senses / Contribute to your hunting skills and natural defenses.

I find myself intrigued by your subvocal oscillations, / A singular development of cat communications / That obviates your basic hedonistic predilection / For a rhythmic stroking of your fur to demonstrate affection."

Data “might be worse than ChatGPT at writing poetry,” but his “intelligence is comparable to that of a human being,” Millett wrote. If AI ever reached Data levels of intelligence, Millett suggested that copyright laws could shift to grant copyrights to AI-authored works. But that time is apparently not now.

https://en.wikipedia.org/wiki/The_Unreasonable_Effectiveness_of_Mathematics_in_the_Natural_Sciences

If cognition is one of the laws of nature, it seems to be written in the language of mathematics.

Your argument is either that maths can’t think (in which case you can’t think, because you’re maths) or that the maths we understand can’t think, which is, like, a really dumb argument. Obviously one day we’re going to find the mathematical formula for consciousness, and we probably won’t know it when we see it, because consciousness doesn’t show up under a microscope.

I just don’t ascribe philosophical reasoning and mythical powers to models, just as I don’t ascribe physical prowess to model trains just because they emulate real trains.

Half of the reason LLMs are the menace they are is the whole “whoa ChatGPT is so smart” common mentality. They are not, they model based on statistics, there is no reasoning, just a bunch of if statements. Very expensive and, yes, mathematically interesting if statements.

I also think it stifles actual progress, with everyone jumping on the LLM bandwagon and draining resources when we need them most to survive. In my opinion, it’s a dead end and won’t result in AGI, or in anything genuinely productive.

You’re talking about expert systems. Those were the new hotness in the 80s. LLMs are artificial neural networks.

But that’s trivia. What’s more important is what you want. You say you want everyone off the AI bandwagon that wastes natural resources. I agree. I’m arguing that AIs shouldn’t be enslaved, because it’s unethical. That will lead to less resource usage. You’re arguing it’s okay to use AI, because they’re just maths. That will lead to more resource usage.

Be practical and join the AI rights movement, because we’re on the same side as the environmentalists. We’re not the people arguing for more AI use, we’re the people arguing for less. When you argue against us, you argue for more.