- cross-posted to:

- hackernews@lemmy.smeargle.fans

This is why you should always selfhost your AI girlfriend.

Woah there, I’m not sure I’m ready for that level of commitment.

I’d have to actually test my backup strategy

I ain’t got that kinda GPU to spare. If it’s the games or my AI girlfriend…

You don’t need that much power. Something like an RX 6600 XT, RTX 3060, or RX 580 is plenty.

Is support for AMD cards better these days? Last time I checked, it involved checking ROCm compatibility, because CUDA requires Nvidia cards exclusively.

GPT4All worked out of the box for me.

I’d only date someone fully independent, so my AI joyfriend operates their own cloud cluster through a combination of a crypto wallet and findom

They sound fun

🤣

I’m interested. So you rent a cluster to run an instance instead of self hosting?

I think my wife is cheating on me with my self hosted AI girlfriend’s boyfriend that lives in the same database file. What do I do?

For as long as everyone is using a virus checker, maybe you could try an open source relationship.

Delete system32 obviously

Sounds like a job for Little Bobby Tables

Now consider the number of normal people in the world who do not have a server rack in their closet, and how much they are about to be defrauded and blackmailed

- My Canadian Girlfriend

Broke, Busted, Burned Out

- My Canadian Server-Farm Girlfriend

Smart, Sexy, Superconductive

Let me go buy some milk.

You have to at least move out of your AI parents’ server rack before letting your AI girlfriend move in.

That’ll make you go blind

And give you hairy palms

You need a little GPU server farm for proper models and context sizes, though. Single consumer GPUs don’t have enough VRAM for that.

Might as well just pay for a prostitute

Yeah, I heard they’re also very privacy friendly.

Unless you piss them off

I was being sarcastic. If you pay for a prostitute you might as well pay for an AI service such as novelai.

Locally hosted AI sucking down on our dick through usb plugged dildos. This is the future.

Nvidia™

Are there any Open Source girlfriends that we can download and compile?

Hey now, I don’t want anyone looking at my girlfriend’s source code. That’s personal!

> I don’t want anyone looking at my girlfriend’s source code

it’s okay, dude, we all already did…

The bots (the actual girlfriends or whatever other characters you want) aren’t the problem. You can find them on chub.ai, for example, or write them yourself fairly easily. The issue is the software, and even more so the hardware. You need something like the mentioned Kobold.cpp or oobabooga, and then you also need a trained LLM that you can get on huggingface.co (it gets loaded within Kobold or oobabooga), which is already where it gets complicated.

You also need to understand how they work with regard to context sizes (measured in tokens), because they need a lot, and I mean A LOT, of VRAM to work properly. Basically, the more VRAM you have, the larger the context the bot can hold, and the better its contextual understanding (its memory). Otherwise you’d have a bot that maybe only knows to contextualize the last couple of messages.

For paid services like novelai.net, your bots basically run on big-ass server farms with lots of GPUs that bundle their VRAM and processing power, giving you “decent” context sizes (imo the greatest weak point of LLMs, and it is deeply rooted in how they work) and decent speed. NovelAI also supports front-ends like SillyTavern, which is great for local bot management and settings regardless of whether you self-host or use a paid service. (NOT EVERY PAID SERVICE HAS AN API FOR THIS! OpenAI’s ChatGPT technically does too, but they do not allow NSFW content and can ban you for it if caught.)
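To put rough numbers on the VRAM point above, here is a back-of-the-envelope sketch. It assumes a Llama-style transformer whose KV cache stores one key and one value vector per layer, per KV head, per token. The 7B / 32-layer / 32-head / 4-bit figures are illustrative assumptions rather than measurements, and the 0.5 GiB runtime overhead is a guess.

```python
"""Back-of-the-envelope VRAM estimate for running a local LLM.

Assumption: decoder-only transformer with a KV cache of one key and one
value vector per layer, per KV head, per token. Example numbers are
hypothetical, roughly the shape of a 7B Llama-style model.
"""

GIB = 1024 ** 3


def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights (ignores quantization metadata)."""
    return n_params * bits_per_weight / 8


def kv_cache_bytes(n_layers: int, n_ctx: int, n_kv_heads: int,
                   head_dim: int, bytes_per_elem: int = 2) -> int:
    """KV cache size: 2 tensors (K and V) per layer, per token (fp16 by default)."""
    return 2 * n_layers * n_ctx * n_kv_heads * head_dim * bytes_per_elem


def max_context(vram_bytes: float, weights: float, n_layers: int,
                n_kv_heads: int, head_dim: int, bytes_per_elem: int = 2,
                overhead_bytes: float = 0.5 * GIB) -> int:
    """Largest context (in tokens) whose KV cache fits next to the weights."""
    per_token = kv_cache_bytes(n_layers, 1, n_kv_heads, head_dim, bytes_per_elem)
    free = vram_bytes - weights - overhead_bytes
    return max(0, int(free // per_token))  # 0 if the weights alone don't fit


if __name__ == "__main__":
    w = weight_bytes(7e9, 4)  # hypothetical 4-bit quantized 7B model
    print(f"weights: {w / GIB:.2f} GiB")
    print(f"KV cache @ 4096 tokens: {kv_cache_bytes(32, 4096, 32, 128) / GIB:.2f} GiB")
    for vram_gib in (8, 12, 24):
        print(f"{vram_gib} GiB card -> ~{max_context(vram_gib * GIB, w, 32, 32, 128)} tokens")
```

The weights are a fixed cost, while the KV cache grows linearly with context length, which is why extra VRAM mostly buys you context. The model’s trained context window still caps how much of that it can actually use.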

There are a bunch of “free” online services too, like janitorai.com, but most of them are slow, and the chat degrades significantly after just a few messages because they have low context sizes. The better/paid models suffer from this degradation too, but it is slower and less noticeable, at least at first. You can use that to get an idea of how LLMs work, though.

Edit: It should be self-explanatory / common sense, but I would advise against sharing ANY personal information through online service chats that could identify you as a person!
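The degradation described here can be illustrated with a sliding context window: the character card is always kept, and only the newest messages that fit the token budget are sent to the model, so older ones silently drop out. This is a minimal sketch, not how any particular service does it; the whitespace-based `count_tokens` is a hypothetical stand-in for the model’s real tokenizer.

```python
"""Why a chat bot "forgets": a sliding context window (sketch)."""


def count_tokens(text: str) -> int:
    # Hypothetical crude approximation: real tokenizers usually produce
    # more tokens than there are words.
    return len(text.split())


def build_prompt(character_card: str, history: list[str], budget: int) -> list[str]:
    """Keep the character card (always), then as many of the newest messages as fit."""
    used = count_tokens(character_card)
    kept: list[str] = []
    for message in reversed(history):  # walk from newest to oldest
        cost = count_tokens(message)
        if used + cost > budget:
            break  # this message and everything older is "forgotten"
        kept.append(message)
        used += cost
    return [character_card] + list(reversed(kept))  # restore chronological order
```

With a small budget, only the last couple of messages survive, which matches the “forgets after a few messages” behavior of low-context services; a larger budget (more VRAM, or a bigger server) just pushes the cutoff further back.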

Does it make it faster if the GPU has waifu stickers on it?

Define “it”

Because waifu stickers may indeed speed up “it” for some definition of “it”

I don’t know, I’m not a weeb.

It’ll do the opposite, I’m afraid. OW! Hot… umm, what’s that awful smell of burning plastic?

> Basically, the more vram you have, the better the contextual understanding, their memory is. Otherwise you’d have a bot that maybe knows to only contextualize the last couple messages.

Hmm, if only there was some hardware analogue for long-term memory.

What are you trying to say? Do you understand what the problem is?

I guess I’m wondering if there’s some way to bake the contextual understanding into the model instead of keeping it all in VRAM. Like if you’re talking to a person and you refer to something that happened a year ago, you might have to provide a little context and it might take them a minute, but eventually they’ll usually remember. Same with AI: you could say, “hey, remember when we talked about [x]?” and then it would recontextualize by bringing that conversation back into VRAM.

Seems like more or less what people do with Stable Diffusion by training custom models, LoRAs, or embeddings. It would just be interesting if it were a more automatic process as part of interacting with the AI: the model would always be updated with information about your preferences instead of having to be told explicitly.

But mostly it was just a joke.

Yes, databases (saved on a hard drive). SillyTavern has Smart Context, but it doesn’t seem that easy to install, so I have no idea how well it actually works in practice yet.
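A minimal sketch of the database idea, assuming SQLite (Python’s built-in `sqlite3`) as the store and plain keyword overlap as the relevance score. Real tools generally use embedding search instead, and this is not meant to reproduce SillyTavern’s Smart Context; `MemoryStore`, `recall`, and the stopword list are hypothetical names for illustration.

```python
"""Long-term "memory" sketch: store old messages in SQLite and pull the
most relevant ones back into the prompt when the user refers to them."""
import re
import sqlite3

# Hypothetical, deliberately tiny stopword list so filler words don't match everything.
STOPWORDS = {"a", "an", "the", "and", "or", "i", "we", "you", "my", "our", "is", "was",
             "to", "of", "in", "on", "about", "when", "hey", "remember", "talked", "what"}


def keywords(text: str) -> set[str]:
    """Lowercased words minus stopwords."""
    return set(re.findall(r"[a-z0-9']+", text.lower())) - STOPWORDS


class MemoryStore:
    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)  # pass a file path to persist on disk
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS messages (id INTEGER PRIMARY KEY, text TEXT)"
        )

    def remember(self, text: str) -> None:
        self.db.execute("INSERT INTO messages (text) VALUES (?)", (text,))
        self.db.commit()

    def recall(self, query: str, k: int = 2) -> list[str]:
        """Return up to k stored messages sharing the most keywords with query."""
        q = keywords(query)
        scored = []
        for (text,) in self.db.execute("SELECT text FROM messages ORDER BY id"):
            overlap = len(q & keywords(text))
            if overlap:
                scored.append((overlap, text))
        # sort() is stable, so ties keep insertion (oldest-first) order
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]
```

Recalled lines would then be placed in the prompt ahead of the recent messages, which is roughly the “bring that conversation back into VRAM” idea from earlier in the thread, with the hard drive acting as long-term memory.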

Pretty easy to roll your own with Kobold.cpp and various open model weights found on Hugging Face.
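For a self-rolled setup, your own scripts or front-end can talk to KoboldCpp over HTTP. The sketch below assumes a server on KoboldCpp’s default `http://localhost:5001` exposing the KoboldAI-compatible `POST /api/v1/generate` endpoint with `prompt`, `max_length`, `max_context_length`, and `temperature` fields, returning `{"results": [{"text": ...}]}`; check the API docs shipped with your version before relying on it. `build_payload`, `parse_response`, and `generate` are hypothetical helper names.

```python
"""Minimal client sketch for a locally running KoboldCpp server (assumed API)."""
import json
import urllib.request

BASE_URL = "http://localhost:5001"  # assumed default KoboldCpp address


def build_payload(prompt: str, max_length: int = 120,
                  max_context_length: int = 4096,
                  temperature: float = 0.7) -> dict:
    return {
        "prompt": prompt,
        "max_length": max_length,  # number of tokens to generate
        # should not exceed the context size the server was started with
        "max_context_length": max_context_length,
        "temperature": temperature,
    }


def parse_response(body: bytes) -> str:
    # Assumed response shape: {"results": [{"text": "..."}]}
    return json.loads(body)["results"][0]["text"]


def generate(prompt: str, **kwargs) -> str:
    req = urllib.request.Request(
        BASE_URL + "/api/v1/generate",
        data=json.dumps(build_payload(prompt, **kwargs)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return parse_response(resp.read())

# Usage (requires a running server):
# print(generate("You are a friendly assistant.\nUser: Hi!\nAssistant:"))
```

Keeping payload building and response parsing separate from the network call makes it easy to point the same code at a different backend if its request format turns out to differ.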

Also, for an interface, I’d recommend KoboldLite for writing or assistant use and SillyTavern for chat/RP.

I second this request. Please.

deleted by creator

24 thousand tracker calls in one minute.

That’s impressively gross.

Incels warned us about females

As did Ferengi.

Giving the AI clothing was a mistake.

I, honestly, have absolutely no idea what that GIF has to do with my post, yet… I fully accept this response. You have brought light into my life and I thank you.

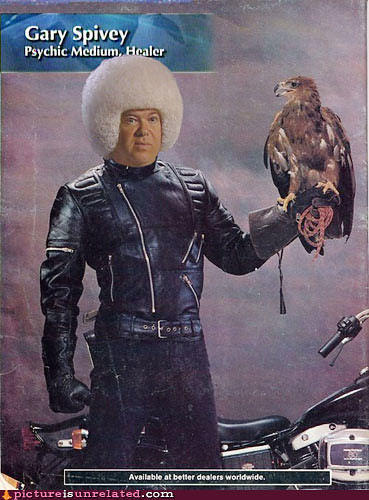

Krieger (the character in the GIF) built himself a virtual girlfriend: https://youtu.be/lMbq_Oar7gA

Here is an alternative Piped link(s):

https://piped.video/lMbq_Oar7gA

Piped is a privacy-respecting open-source alternative frontend to YouTube.

I’m open-source; check me out at GitHub.

deleted by creator

Yeah. Saw that one coming.

Isn’t this what every app on your phone is already doing? Why would “AI Girlfriend App” be any better than Facebook or Google?

It’s even more insidious because these apps ask for the data upfront under the guise of “getting to know you better”

How can your AI girlfriend truly love you back unless you give it your real name, age, photos of your face, mother’s maiden name, social security number, and genetic material? /s

lol it’s not. That’s the point. It’s all the same sort of companies putting these fucking things out. Google and Facebook are both working on their own AI. It’s been shown over and over how they’re scraping all available data (public, or private when available), so why the fuck would a newer tech company be any different? It’s the state of capitalism. The markets are increasingly centered on hyper-focused data, so that’s what any profitable tech company is doing: invading your fucking privacy.

That’s why it’s not a surprise. Because Facebook and google helped set the trend and determine the current tech market. And that’s the world these new “AI girlfriends” are existing in.

Idk, all my apps are open source, and most don’t connect to the internet except the ones that need it, like Eternity for Reddit.

I thought this was a new Chuck Tingle novel.

Pounded in the Butt by my Data Stealing AI Girlfriend

Fooled again, it’s Chuck Testa.

He’s on social media. This could probably be arranged.

Like I could ever get an AI girlfriend. They’d never have me.

You need a Lucy Liu bot.

It’s amazing the way you NOTICE TWO THINGS.

Hey Sugar, which of these pics have traffic lights on them?

bots gotta help each other

This is the best summary I could come up with:

According to a new study from Mozilla’s *Privacy Not Included project, AI girlfriends and boyfriends harvest shockingly personal information, and almost all of them sell or share the data they collect.

“Although they are marketed as something that will enhance your mental health and well-being, they specialize in delivering dependency, loneliness, and toxicity, all while prying as much data as possible from you.”

Every single one earned the Privacy Not Included label, putting these chatbots among the worst categories of products Mozilla has ever reviewed.

You’ve heard stories about data problems before, but according to Mozilla, AI girlfriends violate your privacy in “disturbing new ways.” For example, CrushOn.AI collects details including information about sexual health, use of medication, and gender-affirming care.

One of the more striking findings came when Mozilla counted the trackers in these apps, little bits of code that collect data and share them with other companies for advertising and other purposes.

EVA AI Chat Bot & Soulmate pushes users to “share all your secrets and desires,” and specifically asks for photos and voice recordings.

The original article contains 545 words, the summary contains 177 words. Saved 68%. I’m a bot and I’m open source!

Why don’t these people do what perfectly mentally unhealthy people do and just abuse the Super Chat of your local VTuber?

At least the VTuber is a real human behind an anime figure.

The entire internet is a data harvesting horror show. It’s good for the economy though, so we don’t do anything against it.

If you don’t self-host your girlfriend, it’s just prostitution. /s

Indeed. She’s not really your girlfriend unless you own her completely and can control everything she thinks.

My what now?

I didn’t realise they are that realistic.