tldr: I’d like to set up a reverse proxy with a domain and an SSL cert so my partner and I can access a few selfhosted services on the internet but I’m not sure what the best/safest way to do it is. Asking my partner to use tailscale or wireguard is asking too much unfortunately. I was curious to know what you all recommend.

I have some services running on my LAN that I currently access via tailscale. Some of these services would see some benefit from being accessible on the internet (ex. Immich sharing via a link, switching over from Plex to Jellyfin without requiring my family to learn how to use a VPN, homeassistant voice stuff, etc.) but I’m kind of unsure what the best approach is. Hosting services on the internet has risk and I’d like to reduce that risk as much as possible.

- I know a reverse proxy would be beneficial here so I can put all the services on one box and access them via subdomains but where should I host that proxy? On my LAN using a dynamic DNS service? In the cloud? If in the cloud, should I avoid a plan where you share cpu resources with other users and get a dedicated box?

- Should I purchase a memorable domain or a domain with a random string of characters so no one could reasonably guess it? Does it matter?

- What’s the best way to geo-restrict access? Fail2ban? Realistically, the only people that I might give access to live within a couple hundred miles of me.

- Any other tips or info you care to share would be greatly appreciated.

- Feel free to talk me out of it as well.

EDIT:

If anyone comes across this and is interested, this is what I ended up going with. It took an evening to set all this up and was surprisingly easy.

- domain from namecheap

- cloudflare to handle DNS

- Nginx Proxy Manager for reverse proxy (seemed easier than Traefik and I didn’t get around to looking at Caddy)

- Cloudflare-ddns docker container to update my A records in cloudflare

- authentik for 2 factor authentication on my immich server

Caddy with cloudflare support in a docker container.

This is the solution.

Caddy is simple.

Does Caddy have an OWASP plugin like nginx?

I don’t use it, but it looks like yes.

I currently have a nginx docker container and certbot docker container that I have working but don’t have in production. No extra features, just a barebones reverse proxy with an ssl cert. Knowing that, I read through Caddy’s homepage but since I’ve never put an internet facing service into production, it’s not obvious to me what features I need or what I’m missing out on. Do you mind sharing what the quality of life improvements you benefit from with Caddy are?

Honestly, if you know nginx just stick with it. There’s nothing to be gained by learning a new proxy.

Use Mozilla’s SSL generator if you want to harden nginx (or any proxy you choose)- https://ssl-config.mozilla.org/

I didn’t know about that tool. Thanks for sharing

I never went too far down the nginx route, so I can’t really compare the two. I ended up with caddy because I self-host vaultwarden and it really doesn’t like running over http (for obvious reasons) and caddy was the instruction set I found and understood first.

I don’t make a lot of what I host available to the wider internet. For the ones that I do, I recently migrated to using a Cloudflare tunnel to deal with the internet at large, but still have it come through caddy once it hits my server to get ssl. For everything else I have a headscale server in Oracle’s free tier that all my internal services connect to.

What Caddy does is automatic certs. You set up your web portal and make a wildcard subdomain that points to your portal. Then you just enter two lines in the config and your new app is up. Let’s say you want to put your Home Assistant there. You could add hass.portal.domain.tld { reverse_proxy internal.ip:8123 } and it works. It’s possible with other setups too, but this way it’s no hassle.

How do you handle SSL certs and internet access in your setup?

I have NPM running as “gateway” between my LAN and the Internet and let it handle all of my certificates using the built-in Let’s Encrypt features. None of my hosted applications know anything about certificates in their Docker containers.

As for your questions:

- You can and should – it makes managing the applications much easier. You should use some containerization. Subdomains and correct routing will be done by the reverse proxy. You basically tell the proxy “when a request for foo.example.com comes in, forward it to myserver.local, port 12345” where 12345 is the port the container communicates over.

- 100% depends on your use case. I purchased a domain because I host stuff for external access, too. I just have my setup report its external IP address to my domain provider. It basically is some dynamic DNS service but with a “real domain”. If you plan to just host for yourself and your friends, some generic subdomain from a dynamic DNS service would do the trick. (Using NPM’s Let’s Encrypt configuration will work with that, too.)

- You can’t. Any geo-restriction can be circumvented. If you want to restrict access, use HTTP basic auth. You can set that up using NPM, too. Users authenticate against NPM, and only when that succeeds is the request routed to the actual content.

- You might want to look into Cloudflare Tunnel to hide your real IP address and protect against DDoS attacks.

- No 🙂

“NPM” node package manager?

- Yeah I’ve been playing around with docker and a domain to see how all that worked. Got the subdomains to work and everything, just don’t have them pointing to services yet.

- I’m definitely interested in the authentication part here. Do you have any tutorials you could share?

- Will do, thanks

- ❤️

I don’t know how markdown works. that should be 1,3,4,5

nginx proxy manager

I was reading this and thinking node package manager too and I was both confused and concerned that somebody would sit all of their security on node package manager!

That makes much more sense 🙂

there’s so many acronyms. Thanks

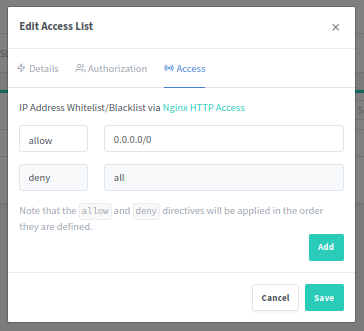

Authentication with NPM is pretty straightforward. You basically just configure an ACL, add your users, and configure the proxy host to use that ACL.

I found this video explaining it: https://youtu.be/0CSvMUJEXIw?t=62

NPM unfortunately has had a long-standing bug since 2020 that requires you to add a specific configuration when setting up the ACL as shown in the video.

At the point where he is on the “Access” tab with all the allow and deny entries, you need to add an allow entry with 0.0.0.0/0 as the IP address.

Other than that, the setup shown in the video works in the most recent version.

or a domain with a random string of characters so no one could reasonably guess it? Does it matter?

That does not work. As soon as you get SSL certificates, expect the domain name to be public knowledge, especially with Let’s Encrypt and all other certificate authorities with transparency logs. As a general rule, don’t rely on something to be hidden from others as a security measure.

deleted by creator

Damn, I didn’t realize they had public logs like that. Thanks for the heads up

https://crt.sh/ would make anyone who thought obscurity would be a solution poop themselves.

5). Hey OP, don’t worry, this can seem kind of scary at first, but it is not that difficult. I’ve skimmed some of the other comments and there are plenty of good tips here.

2). Yes, you will want your own domain and there is no fear of other people “knowing it” if you have everything set up correctly.

1b). Any cheap VPS will do and you don’t need to worry about it being virtualized rather than dedicated. What you really care about is bandwidth speed and limits because a reverse proxy is typically very light on resources. You would be surprised how little CPU/memory it needs.

1a). I use a cheap VPS from RackNerd. Once you have access to your VPS, just install your proxy directly into the OS or in Docker. Whichever is easier. The most important thing for choosing a reverse proxy is automatic TLS/Let’s Encrypt. I saw a comment from you about certbot… don’t bother with all that nonsense. Either Traefik, Caddy, or Nginx Proxy Manager (not vanilla Nginx) will do all this for you–I personally use Traefik unless for some reason I can’t. Way less headaches. The second most important thing to decide is how your VPS in the cloud will connect back to your home securely… I personally use Tailscale for that and it works perfectly fine.

3). Honestly, I think Fail2Ban and geo restrictions are overdoing it. Fail2ban has never gotten me any lift because any sort of modern brute force attack will come from a botnet that has 1000s of unique IPs… never triggering Fail2ban because no repeat offenders. Just ensure your VPS has a firewall enabled and you know what ports you are exposing from Docker and you should be good. If your services don’t natively support authentication, look into something like Authelia or Authentik. Rather than Fail2Ban and/or geo restrictions, I would be more inclined to suggest a WAF like Caddy WAF before I reached for geo restrictions. Again, assuming your concern is security, a WAF would do way more for you than IP restrictions which are easily circumvented.
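To make the botnet point concrete, here is a rough Python sketch of threshold-based banning. It is not how Fail2ban is implemented; the threshold value, the function name, and the documentation-range IP addresses are all made up for illustration.

```python
from collections import Counter

# Hypothetical ban threshold, similar in spirit to a "max retries" setting.
MAX_RETRY = 5


def banned_ips(failed_logins, max_retry=MAX_RETRY):
    """Return the set of source IPs with at least max_retry failed attempts."""
    counts = Counter(failed_logins)
    return {ip for ip, attempts in counts.items() if attempts >= max_retry}


# One noisy host making 20 failed attempts: it crosses the threshold.
single_host = ["203.0.113.7"] * 20

# A botnet making the same 20 attempts, one per address.
botnet = [f"198.51.100.{i}" for i in range(1, 21)]

print(banned_ips(single_host))  # the single host gets banned
print(banned_ips(botnet))       # no address repeats, so nothing gets banned
```

The same number of guesses reaches the login page in both cases; per-IP counting only catches the first one.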

4). Have fun!

EDIT: formatting

I appreciate the info, thanks

If security is one of your concerns, search for “HTTP client side certificates”. TL;DR: you can create certificates to authenticate the client and configure the server to allow connections only from trusted devices. It adds extra security because attackers cannot leverage known vulnerabilities on the services you host since they are blocked at http level.

It is a little difficult to find good and updated documentation but I managed to make it work with nginx. The downside is that Firefox mobile doesn’t support them, but Firefox PC and Chrome have no issues.

Of course you also want a server-side certificate. The easiest way is to get it from Let’s Encrypt.

That’s interesting, I didn’t know that was a thing. I’ll look into it, thanks!

I remember that I started by following these two guides.

https://fardog.io/blog/2017/12/30/client-side-certificate-authentication-with-nginx/

https://stackoverflow.com/questions/7768593/

Something I’m not sure is mentioned there is that Android (at least the version on my phone) accepts only a legacy format for certificates, and the error message when you try to import the new format is totally opaque. If you cannot import it, just check the openssl flags to change the export format.

For point number 2, security through obscurity is not security.

Besides, all issued certificates are logged publicly. You can search them here: https://crt.sh/

Nginx Proxy Manager is easy to set up and will do LE ACME certs, and it has a nice GUI to manage it.

If it’s just access to your stuff for people you trust, use tailscale or wireguard (or some other VPN of your choice) instead of opening ports to the wild internet.

Much less risk.

I use a central nginx container to redirect to all my other services using a wildcard let’s encrypt cert for my internal domain from acme.sh and I access it all externally using a tailscale exit node. The only publicly accessible service that I run is my Lemmy instance. That uses a cloudflare tunnel and is isolated in its own vlan.

TBH I’m still not really happy having any externally accessible service at all. I know enough about security to know that I don’t know enough to secure against much anything. I’ve been thinking about moving the Lemmy instance to a vps so it can be someone else’s problem if something bad leaks out.

Don’t fret, not even Microsoft does.

You’re not as valuable a target as Microsoft.

It’s just about risk tolerance. The only way to avoid risk is to not play the game.

wildcard let’s encrypt cert

I know what “wildcard” and “let’s encrypt cert” are separately but not together. What’s going on with that?

How do you have your tailscale stuff working with ssl? And why did you set up ssl if you were accessing via tailscale anyway? I’m not grilling you here, just interested.

I know enough about security to know that I don’t know enough to secure against much anything

I feel that. I keep meaning to set up something like nagios for monitoring and just haven’t gotten around to it yet.

So when I ask Let’s Encrypt for a cert, I ask for *.int.teuto.icu instead of specifically jellyfin.int.teuto.icu, that way I can use the same cert for any internally running service. Mostly I use SSL on everything to make browsers complain less. There isn’t much security benefit on a local network. I suppose it makes it harder to spoof on an external network, but I don’t think that’s a serious threat for a home net. I used to use home.lan for all of my services, but that has the drawback of redirecting to a search by default on most browsers. I have my tailscale exit node running on my router and it just works with SSL like anything else.

Ok so I currently have a cert set up to work with:

www.domain.com (some browsers seemingly didn’t like it if I didn’t have www)

Are you saying I could just configure it like this:

*.domain.com

The idea of not having to keep updating the cert with new subdomains (and potentially break something in the process) is really appealing

Yes. If you’re using Let’s Encrypt, note that they do not support wildcard certs with the HTTP-01 challenge type. You will need to use the DNS-01 challenge type. To use it you need a domain registrar or DNS provider that supports API DNS updates, like Cloudflare, and then you can use the acme.sh package. Here is an example guide I found.

Note that you could still request multiple explicit subdomains in the same issue/renew commands so it’s not a huge deal either way but the wildcard will be more seamless in the future if you don’t know what other services you might want to selfhost.
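For anyone wondering what a wildcard cert actually covers, here is a small Python sketch of the usual matching rule: the `*` stands in for exactly one leftmost label. The function name is invented for this example, and real TLS libraries handle more edge cases than this.

```python
def wildcard_matches(pattern: str, hostname: str) -> bool:
    """Check whether a certificate name such as '*.domain.com' covers a hostname.

    Simplified rule: a leading '*' matches exactly one non-empty label.
    """
    pattern_labels = pattern.lower().split(".")
    host_labels = hostname.lower().split(".")

    # The wildcard never spans multiple labels, so the label counts must agree.
    if len(pattern_labels) != len(host_labels):
        return False

    if pattern_labels[0] == "*":
        return host_labels[0] != "" and pattern_labels[1:] == host_labels[1:]

    return pattern_labels == host_labels


print(wildcard_matches("*.domain.com", "jellyfin.domain.com"))  # covered
print(wildcard_matches("*.domain.com", "domain.com"))           # bare domain is not covered
print(wildcard_matches("*.domain.com", "a.b.domain.com"))       # nested subdomain is not covered
```

So if you also want the bare domain on the same cert, it has to be requested as an extra name next to the wildcard.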

awesome, thanks for the info

It doesn’t improve security much to host your reverse proxy outside your network, but it does hide your home IP if you care.

If your app can be exploited over the web and through a proxy it doesn’t matter if that proxy is on the same machine or over the network.

A fairly common setup is something like this:

Internet -> nginx -> backend services.

nginx is the https endpoint and has all the certs. You can manage the certs with letsencrypt on that system. This box now handles all HTTPS traffic to and within your network.

The more paranoid will have parts of this setup all over the world, connected through VPNs so that “your IP is safe”. But it’s not necessary and costs more. Limit your exposure, ensure your services are up-to-date, and monitor logs.

fail2ban can give some peace-of-mind for SSH scanning and the like. If you’re using certs to authenticate rather than passwords though you’ll be okay either way.

Update your servers daily. Automate it so you don’t need to remember. Even a simple “doupdates” script that just does “apt-get update && apt-get upgrade && reboot” will be fine (though you can make it smarter about when it needs to reboot). Have its output mailed to you so that you see if there are failures.

You can register a cheap domain pretty easily, and then you can sub-domain the different services. nginx can point “x.example.com” to backend service X and “y.example.com” to backend service Y based on the hostname requested.
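As a rough illustration of what “based on the hostname requested” means, here is a tiny Python sketch of the lookup a reverse proxy does. This is not nginx config; the route table, the backend addresses, and the function name are all hypothetical.

```python
# Hypothetical route table: requested hostname -> (backend address, port).
ROUTES = {
    "x.example.com": ("10.0.0.10", 8080),
    "y.example.com": ("10.0.0.11", 8096),
}


def pick_backend(host_header, routes=ROUTES):
    """Return the backend for a request's Host header, or None if unknown."""
    # Drop an optional ":port" suffix and normalise case before the lookup.
    host = host_header.split(":", 1)[0].strip().lower()
    return routes.get(host)


print(pick_backend("x.example.com"))      # backend for service X
print(pick_backend("Y.example.com:443"))  # backend for service Y
print(pick_backend("other.example.com"))  # None: no such service configured
```

Everything arrives on the same IP and port 443; only the hostname the client asked for decides which backend sees the request.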

I would recommend automating only daily security updates, not all updates.

Ubuntu and Debian have “unattended-upgrades” for this. RPM-based distros have an equivalent.

Agree - good point.

Why is it too much asking your partner to use wireguard? I installed wireguard for my wife on her iPhone, she can access everything in our home network like she was at home, and she doesn’t even know that she is using VPN.

A few reasons

- My partner has plenty of hobbies but sys-admin isn’t one of them. I know I’ll show them how to turn off wireguard to troubleshoot why “the internet isn’t working” but eventually they would forget. Shit happens, sometimes servers go down and sometimes turning off wireguard would allow the internet to work lol

- I’m a worrier. If there was an emergency, my partner needed to access the internet but couldn’t because my DNS server went down, my wireguard server went down, my ISP shit the bed, our home power went out, etc., and they forgot about the VPN, I’d feel terrible.

- I was a little too ambitious when I first got into self hosting. I set up services and shared them before I was ready and ended up resetting them constantly for various reasons. For example, my Plex server is on its 12th iteration. My partner is understandably wary of trying stuff I’ve set up. I’m at a point where I don’t introduce them to a service I set up unless accessing it is no different than using an app (like the Homeassistant app) or visiting a website. That intermediary step of ensuring the VPN is on and functional before accessing the service is more than I’d prefer to ask of them.

Telling my partner to visit a website seems easy, they visit websites every day, but they don’t use a VPN every day and they don’t care to.

deleted by creator

I get where the original commenter is coming from. A VPN is easy to use, why not have my partner just use the VPN? But like, try adding something to your routine that you don’t care about or aren’t interested in. It’s an uphill battle and not every hill is worth dying on.

All that to say, I appreciate your comment.

- I don’t think this is a problem with tailscale but you should check. Also you don’t have to pipe all the traffic through your tunnel. In the allowed IPs you can specify only your subnet so that everything else leaves via the default gateway.

- In the DNS server field in your WireGuard config you can specify anything; it doesn’t have to be a private (RFC 1918) address. 1.1.1.1 will work too.

- At the end of the day, a threat model is always gonna be security vs. convenience. Plex was used as an attack vector in the past as most people don’t rush to patch it (and rightfully so, there are countless horror stories of PMS updates breaking the whole thing entirely). If you trust that you know what you’re doing, and trust the applications you’re running to treat security seriously (hint: Plex doesn’t) then go ahead, set up your reverse proxy server of choice (easiest would be Traefik, but if you need more robustness then nginx is still king) and open 443 to the internet.
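To illustrate the allowed-IPs point from the first bullet, here is a minimal Python sketch of the split-tunnel decision: only destinations inside the listed ranges go through the tunnel, everything else leaves via the default gateway. The 192.168.1.0/24 subnet and the function name are assumptions for the example, not taken from anyone’s real config.

```python
import ipaddress

# Hypothetical home subnet listed as the peer's allowed IPs (split tunnel).
ALLOWED_IPS = [ipaddress.ip_network("192.168.1.0/24")]


def goes_through_tunnel(destination, allowed_ips=ALLOWED_IPS):
    """Return True if traffic to this destination would be sent into the tunnel."""
    addr = ipaddress.ip_address(destination)
    return any(addr in network for network in allowed_ips)


print(goes_through_tunnel("192.168.1.20"))  # home server: via the tunnel
print(goes_through_tunnel("1.1.1.1"))       # public internet: via the default gateway

# Listing 0.0.0.0/0 instead sends all IPv4 traffic through the tunnel.
full_tunnel = [ipaddress.ip_network("0.0.0.0/0")]
print(goes_through_tunnel("1.1.1.1", full_tunnel))
```

With the split setup, the home server being down only breaks access to the home services, not the phone’s regular internet.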

Tailscale is very popular among people I know who have similar problems. Supposedly it’s pretty transparent and easy to use.

If you want to do it yourself, setting up dyndns and a wireguard node on your network (with the wireguard udp port forwarded to it) is probably the easiest path. The official wireguard vpn app is pretty good at least for android and mac, and for a linux client you can just set up the wireguard thing directly. There are pretty good tutorials for this iirc.

Some dns name pointing to your home IP might in theory be an indication to potential hackers that there’s something there, but just having an alive IP on the internet will already get you malicious scans. Wireguard doesn’t respond unless the incoming packet is properly signed so it doesn’t show up in a regular scan.

Geo-restriction might just give a false sense of security. Fail2ban is probably overkill for a single udp port. Better to invest in having automatic security upgrades on and making your internal network more zero trust.

Nginx Proxy Manager + LetsEncrypt.

I use nginx proxy manager and let’s encrypt with a porkbun domain, was very easy to set up for me. Never tried caddy/traefik/etc though. Geo blocking happens on my OPNsense with the built in tools.

Do you have instructions on how you set that up?

At a high level you forward ports 80 and 443 to NPM from your router. In NPM you set up your proxy by IP address and port and you can also set up automatic SSL certs when you create the proxy via letsencrypt. I also run a DDNS auto update that tells porkbun if my IP changes. I’d be happy to get into some more specifics if there’s a particular spot you’re stuck. This is all assuming you have a public IPv4 and aren’t behind cgnat. If you have cgnat you’re not totally fucked but it makes it more complicated. If it’s OPNsense related struggles that shit is mysterious to me, I’ve only been running it a few weeks and it’s not fully configured. Still learning.

Why am I forwarding all http and https traffic from WAN to a single system on my LAN? Wouldn’t that break my DNS?

You would be forwarding ingress traffic (traffic not originating from your internal network) to 443/80. This doesn’t affect egress requests (requests from users inside your network requesting external sites), so it wouldn’t break your internal DNS resolution of sites. All traffic heading to your router from outside origins would be pushed to your reverse proxy where you can then route however you please to whatever machine/port your apps live on.

The reverse proxy is the single system because it tells the incoming traffic where to go. It also doesn’t really do anything unless the incoming traffic is requesting one of the domains you set up. It doesn’t affect your internal DNS. You are able to redirect from the public address to your internal server through DNS though.

Either tailscale or cloudflare tunnels are the best-suited solutions, as other comments said.

For tailscale, as you already set it up, just make sure you have an exit node where your services are. I had to do a bit of tinkering to make sure that the IPs were resolved: it’s just an argument to the tailscale command.

But if you don’t want to use tailscale because it’s too complicated for your partner, then Cloudflare tunnels are the other way to go.

How it works is by creating a tunnel between your services and Cloudflare, kind of like how a VPN would work. You usually configure the tunnel with the cloudflared CLI or directly through Cloudflare’s website. Your domain’s DNS should be managed by Cloudflare, by the way, because you have to create a binding such as service.mydns.com -> myservice.local. Cloudflare can then reach your local service through the tunnel and expose it at a public URL.

Just so you know, Cloudflare tunnels are free for some of that usage. However, Cloudflare has the keys for your SSL traffic, so they could in theory have a look at your requests.

Best of luck with the setup!

Thanks for the info, I appreciate it

deleted by creator

ISPs shouldn’t care unless it is explicitly prohibited in the contract. (I’ve never seen this)

I still wouldn’t expose anything locally though since you would need to pay for a static IP.

Instead, I just use a VPS with Wireguard and a reverse proxy.

Do you mind giving a high level overview of what a Cloudflare tunnel is doing? Like, what’s connected to what and how does the data flow? I’ve seen cloudflare mentioned a few other times in the comments here. I know Cloudflare offers DNS services via their 1.1.1.1 and 1.0.0.1 IPs and I also know they somehow offer DDoS protection (although I’m not sure how exactly. caching?). However, that’s the limit of my knowledge of Cloudflare.

deleted by creator